Over the past two years, my work has increasingly revolved around a single, persistent question: how do we integrate artificial intelligence into education without weakening the very human capacities we are trying to develop?

This question did not emerge in abstraction. It emerged from real projects. From leading AI policy development for Catholic schools under the MaPSA AI Integration Framework and Policy Guidelines. From designing AI Readiness assessment domains for institutions. From building GabAI as a teacher-centered AI assistant rather than a shortcut engine. From delivering national and international talks on ethical AI use, academic integrity, and cognitive overreliance. From training faculty on prompt engineering, AI literacy, and research applications. From confronting the misuse of AI detectors and the false certainty they create. From coaching schools aspiring toward deeper, more principled digital maturity.

Across these projects and engagements, one pattern became clear. Many institutions are treating AI as an adoption problem. It is not. AI is not another device rollout. It is not a new learning management system. It is not merely a pedagogical enhancement tool. AI is a cognitive technology. This distinction changes the unit of analysis.

The Core Premise: Augmentation Within Ethical Governance

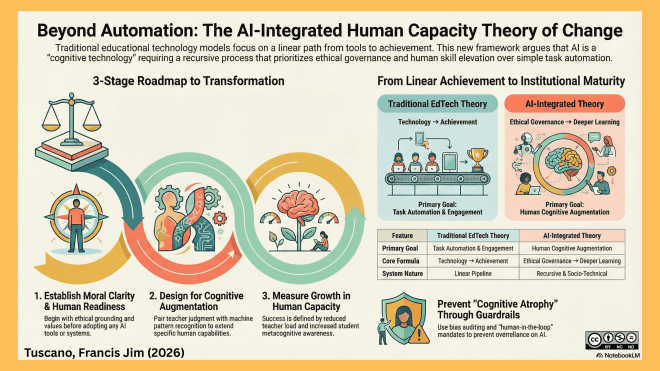

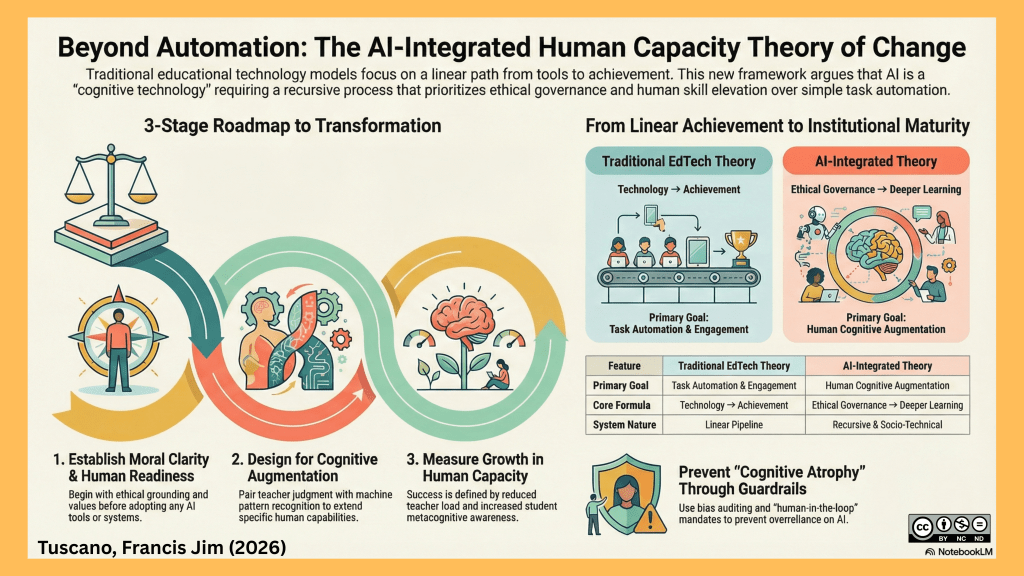

A traditional educational theory of change follows a linear pipeline: inputs lead to activities, activities lead to outputs, outputs generate outcomes, and outcomes produce impact. This structure assumes that humans remain the sole decision-makers and knowledge producers within the system.

AI disrupts that assumption. The moment we introduce a non-human cognitive partner into instructional design, assessment feedback, research writing, policy drafting, and governance processes, the system becomes socio-technical. Human judgment now operates in interaction with machine-generated pattern recognition, synthesis, and prediction.

Through my recent work in AI policy drafting, teacher AI literacy frameworks, readiness diagnostics, and the development of GabAI as a guided augmentation platform, I have become convinced that we need a more rigorous model. What I am developing is an AI-Integrated Human Capacity Theory of Change.

The core premise is simple but demanding: sustainable transformation in the age of AI occurs not when AI automates tasks, but when AI augments human cognitive capacity within ethically governed systems.

When schools ask how to “use AI more,” the better question is: which human capacity are we strengthening? In an AI Readiness Toolkit that I am close to completing, I structured the domains around leadership and governance, teacher AI literacy, student ethical maturity, instructional systems integrity, and data protection. The organizing principle was never tool adoption. It was capacity elevation.

- The first stage of the theory is human readiness and moral clarity. In leading AI policy conversations, I found that educational institutions must first define their ethical posture. Which values must we preserve and keep present in every decision? What ethical considerations guide our use of AI? What are our disclosure norms? What constitutes appropriate AI assistance? Where is human oversight mandatory? What risks are acceptable? Without clarity at this stage, adoption becomes performative or fragmented.

- The second stage is intentional cognitive augmentation design. In building GabAI, my co-founders and I at Tagros Education deliberately avoided positioning it as an answer generator. Instead, we designed prompt cards to support instructional planning, formative feedback, differentiation, and reflection. The guiding rule was clear: teacher judgment must remain central. AI supports pattern recognition and idea generation; it does not replace pedagogical reasoning. If an AI function cannot be mapped to a clearly defined human capability it enhances, it should not be implemented.

- The third stage is workflow redesign. Across faculty trainings, one recurring observation emerged: AI does not simply change tasks; it reshapes processes. Lesson planning becomes iterative refinement rather than blank-page drafting. Assessment shifts from grading to feedback amplification. Research moves from information retrieval to synthesis evaluation and source validation. If institutions maintain old workflows while inserting AI tools, they create noise, not transformation.

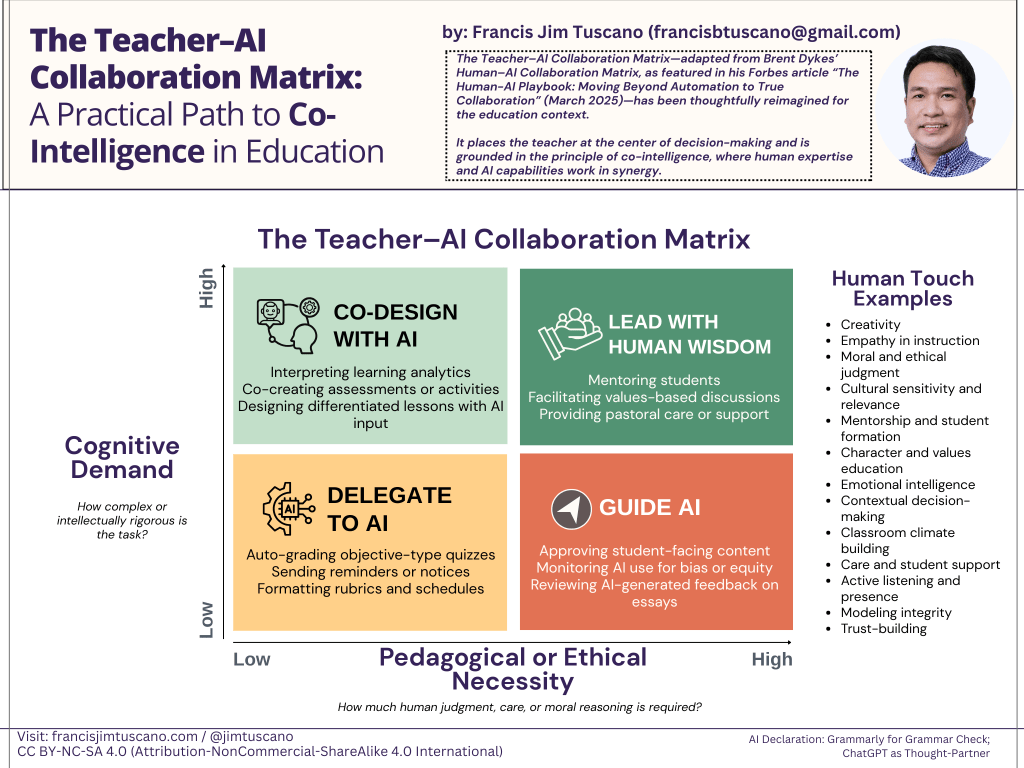

- The fourth stage is human skill elevation. As AI handles lower-level production tasks, professional effort must move upward. Critical thinking, ethical reasoning, interdisciplinary design, metacognition, and relationship-building become more central. In multiple leadership sessions, I have argued that the real risk of AI in education is not automation. It is the quiet erosion of thinking. The antidote is not prohibition, but deliberate skill elevation. It is also worth noting that I have been proposing an AI-Human Collaboration Matrix, in which I argue that it is acceptable to say, “No, at this point, AI won’t be used.”

- The fifth stage is system-level reinforcement. Policy development, professional learning, governance structures, and metrics must align. In the MaPSA AI policy initiative, reinforcement mechanisms were embedded into domains covering teacher use, student use, governance, and student services. Without structural reinforcement, AI integration regresses into novelty or misuse.

- The sixth and final stage is measurable human impact. Across consulting engagements, I have consistently pushed institutions to move beyond usage statistics. The relevant metrics are reductions in unnecessary cognitive load, improvements in instructional quality, growth in student metacognitive awareness, strengthened equity indicators, and deepened ethical literacy. If human capacity is not increasing, the system is not improving.

Confronting the Shadow Side

Through real cases of AI misuse, overdependence, and premature accusations driven by unreliable detection tools, I have observed how easily integration can become degenerative. Unchecked AI use can produce cognitive outsourcing and skill atrophy. Therefore, any serious theory of change must embed protective mechanisms: AI literacy development, disclosure norms, human-in-the-loop mandates, bias auditing protocols, and safeguards against overreliance.

Conceptually, this work draws from socio-technical systems theory, adoption dynamics research, instructional knowledge frameworks, and international AI competency standards. Practically, it is grounded in lived implementation: policy drafting, system diagnostics, professional development design, product development through GabAI, and institutional coaching across diverse educational contexts.

At this stage, the AI-Integrated Human Capacity Theory of Change remains under refinement. The next phase is formalizing causal pathways, recursive feedback loops, and maturity rubrics that resist performative adoption. However, the direction is clear: AI integration is not a technology problem, but a governance and human capacity problem.

The institutions that mature in this era will not be those that deploy AI most aggressively. They will be those that design ethically, elevate human judgment intentionally, and measure growth in cognitive and moral capacity with discipline. This framework is my attempt to articulate that path with precision and integrity.